Agentic AI promises a shift from systems that simply follow instructions to systems that can evaluate context, make decisions, and act toward defined outcomes. For organizations exploring this next phase of AI maturity, the potential is compelling. Yet many initiatives stall or quietly fail for a simple reason: data readiness for AI is not in place.

In most stalled initiatives, the problem is not that the technology is immature. The problem is that decision-making systems are only as capable as the data they rely on. Without clarity, consistency, and trust in data, autonomy becomes a risk rather than an advantage.

Understanding What Agentic AI Actually Requires

Agentic AI operates differently from traditional automation and advanced analytics. Instead of responding to predefined triggers, it continuously interprets signals, weighs trade-offs, and takes action within established boundaries. This model raises the bar for data infrastructure, data quality, data structure, and governance across the organization.

Agentic AI Depends on Context, Not Commands

Traditional automation works well when rules are stable and outcomes are predictable. Agentic AI, however, evaluates changing conditions. It must understand intent, constraints, priorities, and downstream impact. That understanding depends on data that reflects reality, not assumptions.

If inputs are outdated, inconsistent, or incomplete, the system cannot reliably determine what action best supports the organization’s goals. In those cases, autonomy introduces uncertainty instead of efficiency.

Decision Intelligence Requires Coherent Data Signals

Agentic systems synthesize data across platforms, functions, and timelines. Membership data, financial records, engagement metrics, operational capacity, and policy constraints all influence decisions.

When these signals conflict or lack alignment, the system cannot reason effectively. Instead of enabling data-driven decision-making, it hesitates, escalates unnecessarily, or makes choices that appear disconnected from business priorities.

Why Data Readiness Is Often Overestimated

Many organizations believe they are data-ready because they collect large volumes of information or have invested in modern platforms. In practice, readiness is less about quantity and more about reliability, structure, and shared understanding across teams.

Data Exists, But It Is Not Aligned

In growing organizations, data often lives in multiple systems that evolved independently. Definitions differ. Ownership is unclear. Updates lag behind operational reality.

Agentic AI exposes these gaps quickly. When one system treats a member as active and another flags them as inactive, autonomous decision logic breaks down. Humans compensate for these inconsistencies intuitively. AI cannot.
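The active-versus-inactive conflict described above can be caught before an agent acts on it. The following is a minimal sketch, assuming two hypothetical systems (labelled `crm` and `billing` here) that each map a member ID to a status string. The function name `find_status_conflicts` and the sample data are illustrative assumptions, not part of any specific platform.

```python
from __future__ import annotations


def find_status_conflicts(
    crm: dict[str, str], billing: dict[str, str]
) -> dict[str, tuple[str, str]]:
    """Return members whose status differs between two systems.

    Both arguments map a member ID to a status string. Members present
    in only one system are ignored here; a fuller check would report
    those as well.
    """
    conflicts = {}
    # Only compare members that exist in both systems.
    for member_id in crm.keys() & billing.keys():
        if crm[member_id] != billing[member_id]:
            conflicts[member_id] = (crm[member_id], billing[member_id])
    return conflicts


# Hypothetical sample data for illustration only.
crm_status = {"m-001": "active", "m-002": "active", "m-003": "inactive"}
billing_status = {"m-001": "active", "m-002": "inactive", "m-003": "inactive"}

conflicts = find_status_conflicts(crm_status, billing_status)
```

In this sample, only `m-002` is reported, because it is active in one system and inactive in the other. An agent could be configured to defer any decision about a member that appears in this result until the conflict is resolved.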

Historical Data Was Not Designed for Decision Autonomy

Many data environments were built for reporting, not action. They support retrospective analysis but lack the real-time accuracy and contextual metadata agentic systems require.

Without an accurate view of current state, AI-powered decision systems cannot determine whether conditions warrant intervention or patience. The result is either excessive action or missed opportunities.

The Risk of Autonomy Without Trustworthy Data

Agentic AI magnifies both strengths and weaknesses. When data is reliable, decisions scale smoothly. When data is fragile, failures scale just as quickly. This makes data readiness a governance issue, not just a technical one.

Poor Data Forces Human Oversight Back into the Loop

One of the promises of agentic AI is reduced operational burden. When teams cannot trust system decisions, they reinsert manual checks, approvals, and overrides.

At that point, the organization carries both the cost of AI and the cost of human coordination. Operational efficiency declines instead of improving.

Inconsistent Data Undermines Organizational Confidence

When AI decisions appear unpredictable, leaders lose confidence in the system. Adoption slows. Teams resist delegation. Innovation stalls.

This is rarely framed as a data problem, but that is where the issue originates. Trust in outcomes begins with trust in inputs.

What Data Readiness Really Means for Agentic Systems

Data readiness is not a single milestone. It is an operating discipline that ensures information is accurate, connected, and decision-relevant across the organization. Agentic AI requires a higher standard because its outputs directly shape outcomes.

Clear Definitions Across the Organization

Every critical data point must have a shared definition. Status fields, lifecycle stages, financial categories, and engagement signals must mean the same thing everywhere they appear.

Agentic systems rely on semantic consistency. Without it, decision logic produces conflicting results even when calculations are technically correct. This is a core responsibility of data governance.
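One way to picture semantic consistency is a single shared vocabulary that every system-specific value is translated into before any decision logic runs. The sketch below assumes a hypothetical `MemberStatus` definition and hypothetical per-system labels (`"Grace Period"`, `"OVERDUE"`, and so on). None of these names come from a real product.

```python
from enum import Enum


class MemberStatus(Enum):
    """The shared, organization-wide definition (hypothetical)."""

    ACTIVE = "active"
    LAPSED = "lapsed"
    INACTIVE = "inactive"


# Hypothetical per-system vocabularies mapped onto the shared definition.
STATUS_MAP = {
    "crm": {
        "Active": MemberStatus.ACTIVE,
        "Grace Period": MemberStatus.LAPSED,
        "Closed": MemberStatus.INACTIVE,
    },
    "billing": {
        "PAID": MemberStatus.ACTIVE,
        "OVERDUE": MemberStatus.LAPSED,
        "CANCELLED": MemberStatus.INACTIVE,
    },
}


def normalize_status(system: str, raw_value: str) -> MemberStatus:
    """Translate a system-specific status into the shared definition.

    Raises KeyError for unknown systems or values, so unmapped statuses
    are surfaced instead of silently guessed.
    """
    return STATUS_MAP[system][raw_value]
```

With a mapping like this, `normalize_status("crm", "Grace Period")` and `normalize_status("billing", "OVERDUE")` resolve to the same `MemberStatus.LAPSED` value. Failing loudly on unmapped values is a deliberate choice in this sketch: a new status that nobody has defined becomes a governance question rather than a hidden default.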

Real-Time or Near-Real-Time Accuracy

Delayed updates are manageable in reporting environments. They are dangerous in autonomous ones.

If a system cannot tell whether a process is already in motion or a constraint has changed, it may duplicate work, escalate unnecessarily, or miss the right moment to act.
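A freshness check is one simple way to keep an agent from acting on stale state. The sketch below is illustrative only: the record fields `last_updated` and `in_progress`, the outcome labels, and the function names are assumptions made for this example.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone
from typing import Optional


def is_fresh(
    last_updated: datetime, max_age: timedelta, now: Optional[datetime] = None
) -> bool:
    """Return True if the record was updated within max_age of now."""
    now = now or datetime.now(timezone.utc)
    return now - last_updated <= max_age


def decide(record: dict, max_age: timedelta, now: Optional[datetime] = None) -> str:
    """Act only on fresh data; otherwise defer until the data is refreshed."""
    if not is_fresh(record["last_updated"], max_age, now):
        return "defer"  # data too old to trust
    if record["in_progress"]:
        return "skip"  # a process is already in motion, avoid duplicate work
    return "act"


# Hypothetical records, evaluated against a fixed reference time.
reference_time = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh_record = {
    "last_updated": reference_time - timedelta(minutes=5),
    "in_progress": False,
}
stale_record = {
    "last_updated": reference_time - timedelta(hours=6),
    "in_progress": False,
}

fresh_outcome = decide(fresh_record, timedelta(minutes=15), reference_time)
stale_outcome = decide(stale_record, timedelta(minutes=15), reference_time)
```

Here the five-minute-old record leads to `"act"`, while the six-hour-old record leads to `"defer"`. The acceptable age is a business decision, which is why it is passed in as a parameter rather than fixed in the code.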

Ownership and Accountability for Data Health

Data readiness requires clear stewardship. Someone must be responsible for accuracy, updates, and exceptions.

Agentic AI cannot compensate for ambiguous ownership. When no one owns the data, no one trusts the decisions it enables.

Data Readiness Enables Guardrails, Not Guesswork

Agentic AI should not operate freely. It must act within defined boundaries that reflect organizational priorities, risk tolerance, and ethical considerations. Those guardrails depend entirely on structured, reliable data.

Guardrails Are Data-Driven, Not Abstract

Constraints like budget limits, service thresholds, compliance requirements, and member impact criteria must be encoded into the system.

If those constraints are unclear or inconsistently applied, the AI cannot respect them. Clean, structured data is what makes guardrails enforceable rather than aspirational.
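To make the idea of encoded constraints concrete, here is a minimal sketch of a budget guardrail expressed as data. The field names (`max_spend_per_action`, `remaining_budget`, `requires_review_above`), the outcome labels, and the numbers are hypothetical. Real guardrails would also cover service thresholds, compliance rules, and member impact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Budget constraints an agent must respect (hypothetical fields)."""

    max_spend_per_action: float
    remaining_budget: float
    requires_review_above: float


def check_action(cost: float, rails: Guardrails) -> str:
    """Classify a proposed action as 'allow', 'review', or 'block'."""
    # Hard limits first: never exceed the per-action cap or the remaining budget.
    if cost > rails.max_spend_per_action or cost > rails.remaining_budget:
        return "block"
    # Soft limit: permitted, but a human should approve it.
    if cost > rails.requires_review_above:
        return "review"
    return "allow"


# Hypothetical limits for illustration only.
rails = Guardrails(
    max_spend_per_action=500.0,
    remaining_budget=1200.0,
    requires_review_above=200.0,
)

small = check_action(100.0, rails)
medium = check_action(300.0, rails)
large = check_action(600.0, rails)
```

With these limits, a cost of 100 is allowed, 300 is routed to review, and 600 is blocked. The check is only as reliable as `remaining_budget`: if that figure lags behind actual spending, the guardrail will approve actions it should have stopped.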

Transparency Requires Explainable Inputs

Leaders need to understand why a system acted the way it did. That explanation traces back to the data signals and thresholds involved.

When data lineage is unclear, explanations feel arbitrary. Trust erodes even when outcomes are technically acceptable.
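One way to keep explanations from feeling arbitrary is to record, alongside each action, the signals and thresholds that led to it and the system each value came from. The sketch below assumes hypothetical `Signal` and `DecisionRecord` structures. The signal name, source name, and values are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Signal:
    name: str
    value: float
    source: str  # the system the value was read from
    threshold: float


@dataclass
class DecisionRecord:
    action: str
    signals: list[Signal] = field(default_factory=list)

    def explain(self) -> str:
        """Render a plain-text explanation that traces back to the inputs."""
        lines = [f"Action: {self.action}"]
        for s in self.signals:
            relation = "above" if s.value > s.threshold else "at or below"
            lines.append(
                f"- {s.name}={s.value} from {s.source} "
                f"is {relation} threshold {s.threshold}"
            )
        return "\n".join(lines)


# Hypothetical decision for illustration only.
record = DecisionRecord(
    action="send_renewal_reminder",
    signals=[Signal("days_since_login", 45, "engagement_db", 30)],
)
explanation = record.explain()
```

The resulting explanation names the action, the signal, its source, and the threshold it crossed. If a leader questions the decision, the conversation can focus on whether the source or the threshold is wrong rather than on what the system might have been doing.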

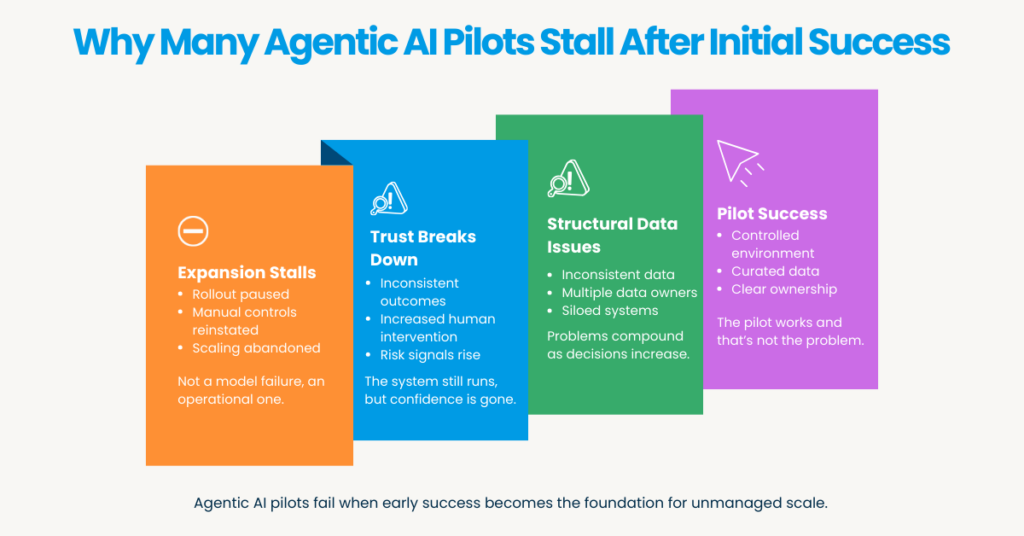

Why Many Agentic AI Pilots Stall After Initial Success

Early demonstrations often look promising because they operate in controlled environments with curated data. Scaling reveals the reality of organizational data complexity.

Pilots Mask Structural Data Issues

Proofs of concept typically rely on clean subsets of data. Once the system interacts with live operations, inconsistencies surface quickly. Without addressing those foundations, expansion becomes risky. Organizations pause rather than proceed.

Scaling Requires Cross-Functional Alignment

Agentic AI does not respect departmental silos. It draws signals wherever they exist. If teams are not aligned on data standards and priorities, autonomy creates friction instead of cohesion. Readiness is as much organizational as it is technical.

Preparing Data for Agentic AI Is a Strategic Investment

Data readiness is often framed as cleanup or maintenance. In reality, it is an investment in decision quality, operational resilience, and leadership clarity.

Strong Data Foundations Support Better Leadership Decisions

When systems produce reliable recommendations and actions, leaders gain confidence in both the technology and the organization’s direction. Time shifts from resolving exceptions to shaping strategy. That shift is where real value emerges.

Technology Should Reinforce Mission, Not Compete with It

Agentic AI works best when it quietly supports outcomes. That only happens when data reflects what the organization truly values. When readiness is in place, technology amplifies impact instead of demanding attention.

Conclusion

Agentic AI is not a layer you add on top of existing systems and hope will adapt. It is a capability that depends on stable, well-governed data infrastructure beneath it.

Organizations that treat data readiness as optional will find autonomy frustrating and fragile. Those that treat it as foundational will unlock systems that genuinely decide, act, and support growth without adding strain.

The future of agentic AI is not defined by algorithms alone. It is defined by the quality of the information we ask those systems to trust.

Author Bio

Akanksha Negi, Technical Content Writer, Aplusify

With a wealth of experience in content strategy, copywriting, and marketing, Akanksha is an expert in creating clear, compelling content that resonates with audiences. She excels at translating complex, Salesforce-based technical concepts into simple, effective messaging. At Aplusify, she leads content initiatives that drive clarity, build strong connections, and maintain consistency across all communication channels.